I am a Senior Researcher & Lecturer at the Computer Graphics Laboratory (CGL) of ETH Zurich and a Research Consultant at Disney Research. I lead the Digital Character AI projects at CGL. My research interests include conversational digital characters, affective computing, human-computer interaction, and applied machine learning.

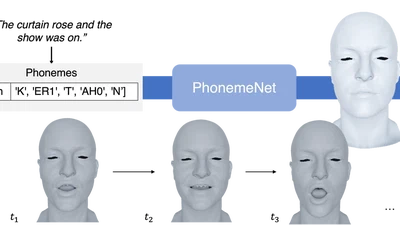

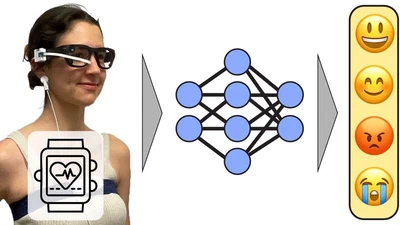

My vision is to create intelligent digital humans that can naturally communicate with, understand, and support people across domains such as education and mental health. My research focuses on multimodal artificial intelligence for interactive digital humans: I develop models that combine large language models, affective computing, and data-driven animation to create embodied conversational agents endowed with autonomous agency and consistent values and beliefs.

My work bridges machine learning, human-computer interaction, and computer graphics to enable AI systems such as Digital Einstein and interactive patient avatars for psychotherapy training and health education.