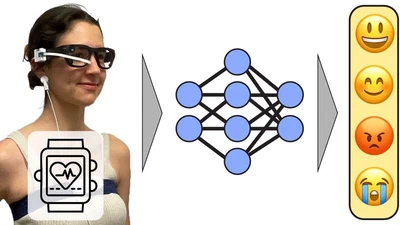

egoEMOTION: Egocentric Vision and Physiological Signals for Emotion and Personality Recognition in Real-World Tasks

A new multimodal dataset and architecture combining egocentric vision and physiological signals for in-the-wild emotion and personality recognition, presented at NeurIPS 2025 …

m.-jammot